_o_ops_!_ Predicting Unintentional Action in Video

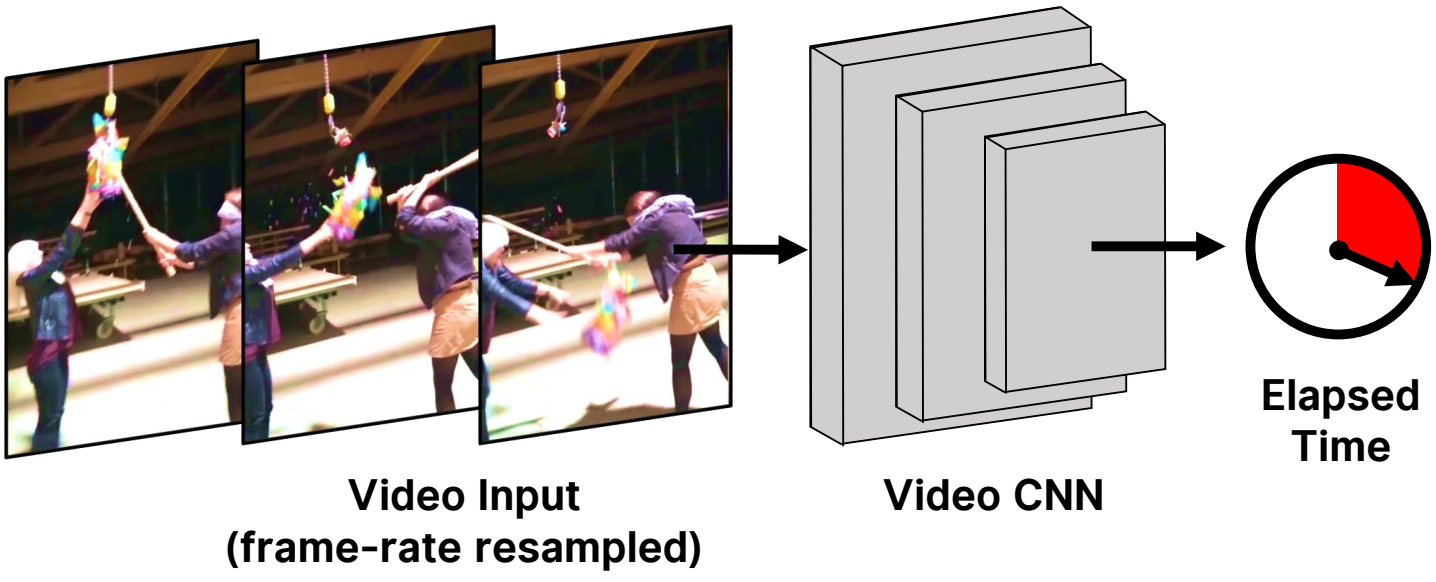

From just a short glance at a video, we can often tell whether a person's action is intentional or not. Can we train a model to recognize this? We introduce a dataset of in-the-wild videos of unintentional action, as well as a suite of tasks for recognizing, localizing, and anticipating its onset. We train a supervised neural network as a baseline and analyze its performance compared to human consistency on the tasks.

We also investigate self-supervised representations that leverage natural signals in our dataset, and show the effectiveness of an approach that uses the intrinsic speed of video to perform competitively with highly-supervised pretraining. However, a significant gap between machine and human performance remains.

Paper

Dataset

Analyzing human goals from videos is a fundamental challenge in computer vision. Since people are usually competent, existing datasets are biased towards successful outcomes. However, this bias for success makes discriminating and localizing visual intentionality difficult for both learning and quantitative evaluation.

We introduce a new annotated video dataset that is abundant with unintentional action, which we have collected by crawling publicly available “fail” videos from the web. The dataset is both large (over 50 hours of video) and diverse (covering hundreds of scenes and activities). We annotate videos with the temporal location at which the video transitions from intentional to unintentional action (shown in the video as OOPS!).

Explore datasetResults

Our trained model learns to localize the transition to unintentional action in video. Below, we show model outputs on a sliding window passed through the input. The predicted transition is at the location with highest probability of transition (shown in yellow).

Code

Acknowledgements

Funding was provided by DARPA MCS, NSF NRI 1925157, and an Amazon Research Gift. We thank Nvidia for donating GPUs. The webpage template was inspired by this project page.